Using It, Without Feeling Used By It

What decades of mapping tech can teach us about co-existing with AI in the workplace

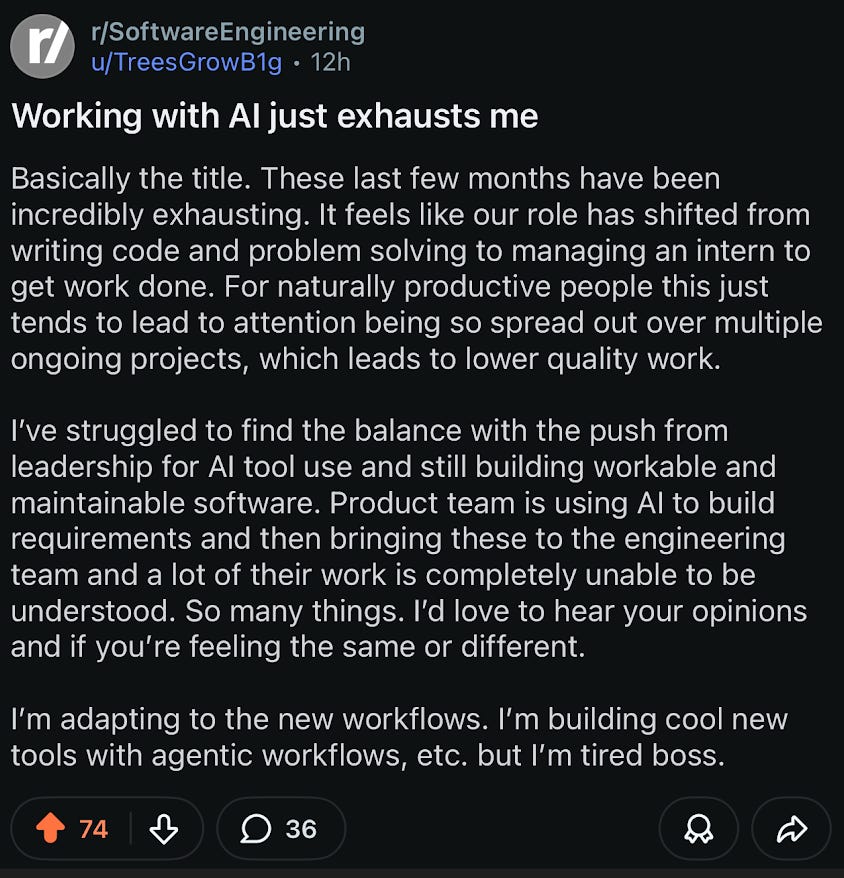

There’s a lot of frustration out there on Reddit and here on Substack.

Developers saying they are already tired of working with AI for their projects. “It’s like managing some interns, instead of creating something fun.”

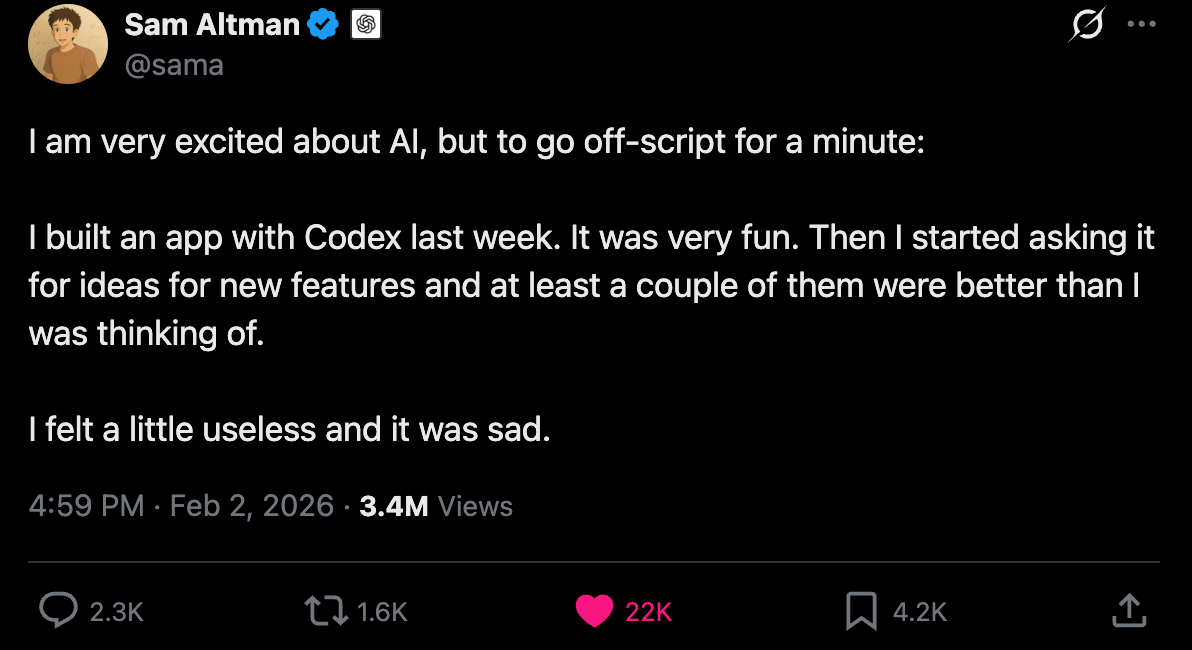

Software engineers saying they feel "sad" because the ghost-in-the-machine can do a better job, and they no longer feel there is much creativity involved in solving problems in code.

But here’s another way to think about it. This is mostly for those who are making these tools and who want to make tools that people enjoy using without feeling used by them.

Whether you use Waze, Google Maps, Apple Maps, Garmin or what have you, map technology has become ubiquitous in how we navigate the cities we live in, and especially the cities we do not. For those few weeks to months of the year we are in foreign places, we rely on those tools to help us get from A to B, find points of interest crowdsourced from what others have said, and steer clear of disasters.

I think that’s what many folks building AI for coding originally intended: for the technology to be a co-pilot in a literal and metaphorical sense. Even the word is subtly suggestive (and not in a nefarious way). It implies that you are in the driver’s seat. Or in this case, the main pilot in the cockpit.

But whatever patchwork of AI systems we’ve ended up with, from point solutions that help with specific dev tasks to full-on automation that writes entire codebases, the experience for a lot of developers has gone from “this is a helpful co-pilot” to “I’m babysitting a machine that doesn’t understand what I actually want.” The nuisance and the feelings of displacement are real.

And maybe part of the reason is that in coding, the iteration loop can be super fast. Things move and change at a pace that’s hard to keep up with, let alone stay ahead of.

Compare that to navigating in our cars. The climb from Level 1 to Level 5 automation has been... well... slower than we ever imagined.

Look at Elon and Tesla’s Full Self-Driving as a good example. Every year a new milestone was promised and every year the goalposts moved. “Robotaxis by 2020” became “maybe supervised FSD by 2024” and even now in 2026 we’re still not at full autonomy in any meaningful way. That’s IRL physics. The real world pushes back. Roads are messy, pedestrians are unpredictable, regulations vary city by city, weather changes everything.

But coding doesn’t have that friction. Pure software, and the diffusion of AI through it, moves at a pace the physical world simply cannot. Which means developers didn’t get the slow on-ramp that drivers got. They got the full thing all at once.

But I digress.

It's Not A Perfect Analog, But What Is Anyway?

I’d like our AI tools and systems to work the way mapping tech works for us when we drive. And to the extent that we can adopt this model for all our AI tools for human augmentation, I think we’ll make it out okay.

Here’s what I mean.

You Pick the Destination, AI Picks the Route

In the future, as the cost of execution and the cost of production in the creation of most digital widgets approach zero (as many others have said), we should make damn sure that we know what we want. Even more so than before.

Here’s an old Turkish proverb:

“No matter how far you have gone down a wrong road, turn back”

While it’s a good lesson in sunk costs, it makes my point for me somewhat.

Think of this: You open Google Maps and start navigating to the place you booked for dinner. Maps is telling you to make those 2 left turns because it’s going to be the fastest route.

But you don’t want to do that and instead just make 1 left even if it takes 3 minutes longer.

Or you don’t do that at all and take the more direct route, even if it goes through the school neighbourhood and you can only drive 15 mph.

The point is, we’ve already been using our “AI” to help us navigate.

In building products, we can call it the Definition of Done. In most other industries it’s the requirements doc. Whatever you call it, you are in the driver’s seat on where the destination is (the requirements and outcomes), and how you get there is still up to you.

And here’s the most interesting part. Google Maps doesn’t let you lose sight of where you want to go. It just recalculates. It doesn’t scream at you that you missed a turn; it just corrects course and gives you the best option from that point forward.

That’s the ideal I think we should get to with our various “co-pilots.”

You define the what. The AI helps with the how. And when you go off-script, it adjusts. No judgment, no displacement, no taking over the wheel. Just recalculating.

What We’ve Already Traded Away

With that said, there are pitfalls that we can learn from mapping tech in our lives.

In recent years, we’ve realized that what we’ve traded for convenience and a guiding hand has been not so great for our overall cognitive health. More studies have been surfacing about the dangers of over-reliance on hitting ‘Start’ on CarPlay. We no longer see the world as we did before and, more importantly, while we navigate the arteries of the world very well, we don’t use our neural pathways the way we used to. Certainly not as much.

Here’s what recent studies have said:

Across these studies [1, 2, 3], the pattern is that:

GPS and turn-by-turn tools offload cognitive demands, reducing the need to build and recall one’s own mental maps of environments.

This offloading appears linked to weaker spatial memory performance and reduced higher-level strategies over time.

Here’s the Takeaway

We’ve already been living with “AI co-pilots” for years. Mapping tech is the longest-running experiment we have in humans augmented by intelligent systems. It works when you stay in the driver’s seat.

The best AI tools should work like Google Maps, not autopilot. You pick the destination. The AI suggests the route. When you go off-script, it recalculates without judgment. It doesn’t take over the wheel.

Developers aren’t frustrated because AI is bad. They’re frustrated because the UX got the relationship wrong. The co-pilot became the pilot and nobody asked if that was okay.

Speed is part of the problem. In driving, we got a slow on-ramp, one automation level at a time. In coding, there was no on-ramp. Developers got the full thing all at once and now they’re processing displacement at the same speed the tools are changing.

But the GPS warning is real. Over-reliance on turn-by-turn navigation has been linked to weaker spatial memory. If we’re not careful, over-reliance on AI coding tools could do the same to our problem-solving muscles. The convenience is worth it only if we stay conscious of what we’re trading away.

Know your destination. As production costs approach zero, the most valuable skill isn’t execution. It’s knowing what you want to build and why. The Turkish proverb applies: no matter how far you’ve gone down the wrong road, turn back. AI makes the turning cheaper. But you still have to know which road is the wrong one.

1. https://www.nature.com/articles/s41598-020-62877-0

2. https://pmc.ncbi.nlm.nih.gov/articles/PMC8032695/

3. https://www.sciencedirect.com/science/article/abs/pii/S107158191830171X


Jorrit